For machine learning to have an impact, the healthcare industry must reimagine how it uses data.

By Shane Quint, Chief Technology Officer

Over the last few decades, one industry after another has been positively disrupted by data-driven technologies like big data and machine learning. The supermarket industry is currently tapping these technologies to deliver targeted marketing to loyal shoppers. Professional sports teams are using them to choose top draft picks and project success. By identifying better routes and transportation methods, data-driven technologies are already saving the logistics industry millions of dollars each year.

Yet while these technologies have disrupted almost every industry, they are still largely shut out of healthcare. The regulatory environment, workflow inefficiencies, and a lack of access to robust data have all been cited as reasons for their slow adoption. However, recent regulatory moves such as the 21st Century Cures Act and CMS’s Promoting Interoperability Rule have finally opened the door to data-driven healthcare.

With more opportunities available, how can healthcare capitalize on big data and machine learning innovations? By borrowing ideas that have been used in other industries for decades.

Borrowing from Retail: The Case for Multi-Variable Analysis in Healthcare

For big data to have an impact, the healthcare industry must reimagine its standard of evidence.

Traditionally, healthcare has followed the process of creating a hypothesis, changing one variable, and seeing if that variable affects the outcome. This has usually taken place in a controlled environment, but real life cannot be controlled like a lab. When it comes to a patient’s health, confounding variables abound.

A multi-variable analysis does the opposite. It examines all of the data to find a pattern in an unbiased fashion. This is where healthcare can learn from retail’s use of big data. Instead of analyzing pre-selected variables, retail has perfected the art of letting data tell a story.

Take grocery giant Kroger, for example. In the late 1990s, Wal-Mart’s advance into the grocery business prompted Kroger to get creative. The company began utilizing a category-management strategy to analyze point-of-sale and loyalty card data, which allowed it to stock its shelves more efficiently. This was a novel approach in the grocery industry, as previous merchandising decisions relied heavily on the manufacturer. Kroger’s decision to more effectively stock shelves by analyzing various data points and sources helped it significantly increase its margins in a low-margin business.

Kroger now uses almost 1,000 algorithms to keep closer tabs on its merchandise and shoppers. The company can determine whether someone is dieting, has children, or is retired, and can market to those customers accordingly. It frequently uses this data to implement tactics such as personalized coupons. According to The Wall Street Journal, some Kroger coupons have a redemption rate of 65%, compared with a national average of about 5%.

A New Scientific Method: Using Data Analytics to Connect Healthcare’s Pieces

The scientific method has proven invaluable in determining the efficacy of drugs in treating various diseases. Using this method, researchers discovered that the protein Merm1 could inhibit the uncontrolled cell growth typical of lung cancer. This discovery has since inspired the development of new treatments that may improve outcomes for lung cancer patients.

However, what happens when the disease is not caused by a single biomarker? Recent cancer research has found that cancerous tumors are often made up of many different genomic alterations, rendering a single treatment option ineffective.

Fortunately, the FoundationOne® blood assay has shown significant improvement in cancer treatment options for non-small cell lung cancer, colorectal cancer, and breast cancer, among others. Its approach employs a multi-variable analysis to measure tumor-mutation burden. By assessing each gene in a tumor, the assay is able to determine the most effective therapy.

Medicine has begun to embrace the potential of multi-variable analysis beyond cancer research. Zebra Medical Vision and others are currently using machine learning and artificial intelligence to determine diagnostic outcomes. This technology has also demonstrated the ability to perform as well as or better than a radiologist at detecting strokes or a clinician at diagnosing wrist fractures.

Historically, machine learning algorithms have been designed to predict a single outcome; a model of this kind is known as a classifier. A classifier is built from a training set, which often consists of data that points to a single conclusion. But what if the patient suffers from comorbid conditions, as is often the case in mental health? Removing the classifier could provide a more comprehensive view of all the conditions a patient may be experiencing, while offering insights into the ailments of patients with similar characteristics.
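To make the contrast concrete, here is a minimal sketch of what looking at a group of similar patients, rather than forcing a single label, might surface. It assumes a cohort of similar patients has already been identified; the records, condition names, and the `condition_prevalence` helper are hypothetical and are not drawn from any real product or dataset.

```python
from collections import Counter

# Hypothetical records: each similar patient may carry several diagnoses at once.
similar_patients = [
    {"id": "p1", "conditions": {"depression", "anxiety"}},
    {"id": "p2", "conditions": {"depression", "adhd"}},
    {"id": "p3", "conditions": {"anxiety"}},
    {"id": "p4", "conditions": {"depression", "anxiety", "ptsd"}},
]

def condition_prevalence(cohort):
    """Return (condition, share of cohort) pairs, most common first."""
    counts = Counter(c for patient in cohort for c in patient["conditions"])
    total = len(cohort)
    # Sort by descending count, then alphabetically, so ties are stable.
    ordered = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(condition, n / total) for condition, n in ordered]

prevalence = condition_prevalence(similar_patients)
for condition, share in prevalence:
    print(f"{condition}: {share:.0%} of similar patients")
```

Instead of one predicted diagnosis, the result is a ranked picture of every condition present among comparable patients, which is the kind of view a single-outcome classifier cannot provide on its own.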

Patient Similarity Analytics: Finding the Needle in the Healthcare Haystack

The strategy of comparing one or more patients to a similar patient group is known as the patient similarity approach. This concept has also been borrowed from the retail industry. Take Amazon’s recommendation engine, for example. By analyzing a buyer’s purchases and search history, and then comparing them to similar buyers, Amazon is able to predict their shopping habits and make targeted recommendations. According to McKinsey, a mind-boggling 35% of Amazon’s sales now come from such recommendations.

Applied to healthcare, the approach behind the Amazon recommendation engine can find similar cohorts of patients without classifying the outcome. This patient similarity approach is a difficult pill to swallow, as it challenges the medical research status quo, which has generally subscribed to using algorithms that predict single outcomes.
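As a rough illustration of the idea, the sketch below finds the patients most similar to a given patient without predicting any outcome. It assumes each patient is described by a handful of numeric characteristics already scaled to a common range and uses cosine similarity as the comparison; the feature choices, values, and function names are hypothetical, and a real system may use a different similarity measure entirely.

```python
import math

# Hypothetical feature vectors, already scaled to the 0-1 range.
# Feature order: [age, symptom score, cognitive test score, medication count]
patients = {
    "p1": [0.30, 0.80, 0.40, 0.20],
    "p2": [0.32, 0.78, 0.45, 0.25],
    "p3": [0.90, 0.10, 0.85, 0.70],
    "p4": [0.28, 0.75, 0.35, 0.15],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def most_similar(target_id, records, k=2):
    """Return the k patients closest to target_id as (id, similarity) pairs."""
    target = records[target_id]
    scored = [
        (other_id, cosine_similarity(target, vector))
        for other_id, vector in records.items()
        if other_id != target_id
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

cohort = most_similar("p1", patients, k=2)
for other_id, score in cohort:
    print(f"{other_id}: similarity {score:.3f}")
```

No label is supplied anywhere in this sketch: the cohort emerges from the characteristics alone, much as a recommendation engine groups shoppers by behavior rather than by a predefined category.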

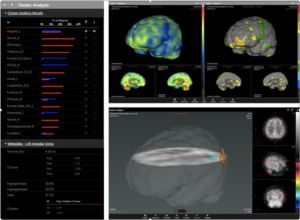

Similar to the Amazon recommendation engine, CereMetrix® Insights (currently under development) uses a patient similarity concept to analyze and identify the most important characteristics about a patient. Its unique approach not only establishes who looks similar to a specific patient but also shows which characteristics are responsible for those relationships.

The engine calculates correlative relationships among characteristics such as atypical brain regions, symptomology, neurocognitive and psychiatric test scores, medication use, and patient demographics, to name a few. Understanding why patients are similar enables recommendations for additional tests or identifies risks, both of which would refine patient diagnoses and treatment paths.
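One simple way to see why two patients look alike is to split a similarity score into per-characteristic pieces. The sketch below does this for cosine similarity, which is a sum of per-feature products divided by the two vector lengths. It is an illustrative assumption, not a description of how CereMetrix® Insights works; the feature names and z-score values are hypothetical.

```python
import math

# Hypothetical characteristics, expressed as z-scores: how far each patient
# sits from the population average on that characteristic.
feature_names = [
    "atypical_region_score",
    "symptom_severity",
    "memory_test",
    "medication_count",
]
patient_a = [2.1, 1.8, -0.2, 0.1]
patient_b = [1.9, 1.5, 0.3, -0.1]

def similarity_contributions(a, b, names):
    """Split the cosine similarity of a and b into one term per characteristic."""
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return {name: (x * y) / norm for name, x, y in zip(names, a, b)}

contributions = similarity_contributions(patient_a, patient_b, feature_names)
total_similarity = sum(contributions.values())  # equals the cosine similarity
top_driver = max(contributions, key=contributions.get)

for name, value in sorted(contributions.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:+.3f}")
print(f"total similarity: {total_similarity:.3f}, main driver: {top_driver}")
```

Characteristics on which both patients deviate in the same direction contribute positively, while those on which they deviate in opposite directions contribute negatively, so the breakdown points to what is actually driving the match.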

Removing predetermined patient labels allows the data to communicate the patient’s story. In turn, the data can provide medical practitioners with the information they need to build more robust patient profiles. It’s a fast track to improved assessments, diagnoses, and treatments. Be prepared to see more machine learning technologies that aid the practitioner by reducing the art of medicine and increasing the science of medicine.

Shane Quint is Chief Technology Officer of CereMetrix®, a browser-based workflow management tool for functional brain imaging analysis. Quint has developed machine learning technologies for companies such as Kroger, United Artists Theaters, The Shane Company, Level 3 Communications, US West and Colorado Prepaid Tuition.